Every day, thousands of creators watch their posts vanish into algorithmic silence without a single warning or explanation. Platforms use sophisticated detection systems to identify and suppress content they flag as problematic, duplicate, or policy-adjacent, and most creators never even realize it's happening to them. Detection evasion has evolved from a niche technical workaround into a real survival strategy for influencers and content managers trying to protect their reach. This article breaks down what detection evasion actually means, how it works in practice, what risks come with it, and where the line between privacy protection and genuine harm sits.

Table of Contents

- Understanding detection evasion: What it really means

- Core mechanics of detection evasion: How it works

- Key vulnerabilities and edge cases: What most creators miss

- Risks, controversies, and platform countermeasures

- The uncomfortable truth: Evasion is survival, but not a solution

- Make your content untraceable: Next steps for creators

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

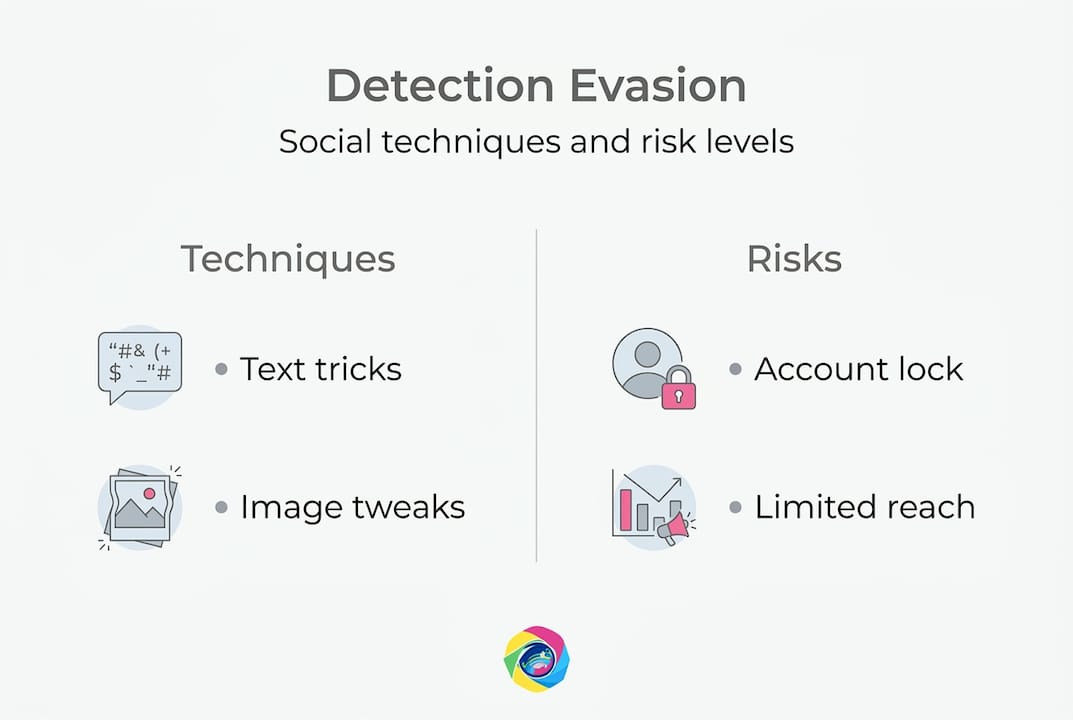

| Detection evasion demystified | Creators use coded language and tactics to circumvent social platform suppression for privacy and reach. |

| Techniques and effectiveness | Obfuscation, emoji swap, and format change are top methods, but effectiveness varies as platforms advance. |

| Risks and consequences | Evasion can protect content, but misuse may result in account bans or ethical concerns. |

| Arms race insight | Platforms and creators are locked in a cycle of evolving detection and evasion strategies. |

Understanding detection evasion: What it really means

The term gets thrown around loosely, so let's anchor it properly. Detection evasion on social media refers to techniques used to circumvent platform algorithms and moderation systems designed to identify and suppress policy-violating content, bots, spam, duplicates, or low-quality posts. That definition covers a wide range of tactics, from subtle image tweaks to elaborate account strategies, and understanding the full scope helps you make smarter decisions as a creator.

For most creators, the problem isn't malicious intent. It's visibility. Platforms like Instagram, TikTok, and YouTube use automated systems to scan every piece of content before it reaches your audience. These systems look for patterns: repeated images, flagged keywords, behavioral signals that suggest bot activity, or metadata linking posts to the same device. When those systems trigger, your content gets suppressed, sometimes permanently, without any human ever reviewing it.

Common content categories that face suppression include:

- Duplicate or near-duplicate images posted across multiple accounts or platforms

- Sensitive topic posts even when they don't violate any specific policy

- Content from new or flagged accounts with low trust scores

- Posts with certain keywords in captions, even when used in an educational or neutral context

- Images carrying identifying metadata that links them to previously penalized accounts

The controversy here is real. On one hand, these detection systems protect platforms from spam, scams, and genuinely harmful content. On the other hand, they frequently catch legitimate creators in the crossfire, suppressing political commentary, health information, adult content from legal creators, and minority voices discussing sensitive lived experiences.

"The challenge for creators is that these systems aren't transparent. You often don't know you've been flagged until your reach collapses, and appeals processes are slow or nonexistent."

That opacity is exactly why safeguarding social media accounts has become a proactive concern rather than a reactive one. Creators who wait until suppression hits lose weeks of momentum. Understanding the system before it flags you gives you options.

The other important distinction to make here is between evasion for privacy and evasion for harm. Most creators using these techniques are trying to protect their reach and their private data, not spread misinformation. But the same tools and tactics can be misused, and that dual-use reality shapes every ethical and legal conversation around this topic.

Core mechanics of detection evasion: How it works

With the basics clarified, we can explore the actual tactics creators use to stay visible in an increasingly aggressive moderation environment.

At the text level, core mechanics include text obfuscation such as algospeak, leetspeak, homoglyphs, and emoji substitutions. Terms like "unalive" (used instead of suicide), "seggs" (for sex), and "cornucopia" (for homophobia) emerged organically from creator communities trying to discuss sensitive topics without triggering keyword filters. Character manipulation, like swapping a standard letter for a visually identical Unicode character, also bypasses basic keyword matching. These tactics are low-tech but can be surprisingly effective against older moderation systems that rely on exact matches.

At the image and account level, the tactics become more layered. Creators commonly use the following approaches:
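To see why exact keyword matching is brittle, and why it tends to stop being brittle once a platform normalizes text first, here is a minimal sketch. The function names and the harmless keyword `example` are illustrative assumptions, not any platform's actual filter; the only library call is Python's standard `unicodedata.normalize`.

```python
import unicodedata


def naive_match(text: str, keyword: str) -> bool:
    """Exact substring check, roughly what a basic keyword filter does."""
    return keyword in text


def normalized_match(text: str, keyword: str) -> bool:
    """Fold compatibility characters and case before matching."""
    folded = unicodedata.normalize("NFKC", text).casefold()
    return keyword.casefold() in folded


# "example" spelled with fullwidth Latin letters (U+FF41 to U+FF5A range).
# They render much like ASCII but are different code points.
disguised = "an \uff45\uff58\uff41\uff4d\uff50\uff4c\uff45 caption"

print(naive_match(disguised, "example"))       # exact match misses it
print(normalized_match(disguised, "example"))  # normalization recovers it
```

NFKC only folds compatibility forms such as fullwidth or stylized letters. It does not map lookalikes from other scripts onto Latin letters, so real systems presumably layer additional confusable-character handling and context models on top of a step like this.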

- Metadata scrubbing to remove location, device, and timestamp data from image files before posting

- Image spoofing to generate visually distinct versions of the same image, defeating hash-based duplicate detection

- Account warming to build a posting history and trust score before introducing sensitive content

- Behavioral spacing to avoid posting in patterns that flag bot-detection algorithms

- Platform-specific format adjustments like changing aspect ratios, color grading, or file formats to break fingerprinting

Here's a comparison of common evasion techniques, their general effectiveness, and the risk level creators should expect:

| Technique | Effectiveness | Risk level | Best use case |
|---|---|---|---|
| Text algospeak | Moderate | Low | Sensitive topic captions |
| Emoji substitution | Moderate | Low | Keyword filter bypass |
| Metadata removal | High | Low | Cross-platform image posting |
| Image spoofing | High | Low to medium | Multi-account posting |
| Account warming | High | Medium | New account trust building |
| Coordinated distribution | High | High | Large-scale campaigns |

For creators managing multiple accounts or posting across platforms, anonymizing social images is one of the most practical and low-risk moves available. Metadata embedded in your image files can link a post on one account to a post on another, even if the images look completely different on screen.
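Before deciding what to do about metadata, it helps to see what a file actually carries. The sketch below is a privacy audit only: it walks the segment headers of a JPEG byte stream and reports which metadata segments are present. The function name and the marker table are assumptions made for illustration, and the parser ignores rarer layouts (such as padding bytes between segments) that a production tool would handle.

```python
import struct

# JPEG segment markers that commonly hold metadata (illustrative subset).
METADATA_MARKERS = {
    0xE1: "APP1 (EXIF/XMP)",
    0xED: "APP13 (IPTC/Photoshop)",
    0xFE: "COM (comment)",
}


def list_metadata_segments(jpeg_bytes: bytes) -> list[tuple[str, int]]:
    """Return (segment name, payload size in bytes) for each metadata segment."""
    if jpeg_bytes[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG stream (missing SOI marker)")
    found = []
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = jpeg_bytes[pos + 1]
        if marker == 0xDA:  # start of scan: compressed pixel data follows
            break
        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        if marker in METADATA_MARKERS:
            found.append((METADATA_MARKERS[marker], length - 2))
        pos += 2 + length
    return found


# Synthetic stream: SOI, a JFIF header, one EXIF segment, then start of scan.
exif_payload = b"Exif\x00\x00" + b"x" * 10
sample = (
    b"\xff\xd8"
    + b"\xff\xe0" + struct.pack(">H", 7) + b"JFIF\x00"
    + b"\xff\xe1" + struct.pack(">H", 2 + len(exif_payload)) + exif_payload
    + b"\xff\xda\x00\x02"
)
print(list_metadata_segments(sample))
```

On a real photo you would pass the file's bytes, for example `open(path, "rb").read()`. An empty list means none of the listed segment types were found; it does not prove the file is free of every identifying trace.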

Pro Tip: Use format and obfuscation changes sparingly and inconsistently. Algorithms are trained to detect patterns, so if you always use the same swap or always post at the same interval, the pattern itself becomes a signal. Rotate your approaches regularly and vary your timing.

One thing creators consistently underestimate is how quickly platforms update their detection models. A technique that works today may be flagged within weeks as platforms retrain their classifiers on new evasion examples. This is why understanding the mechanics matters more than memorizing specific workarounds. Knowing why something works lets you adapt when it stops working.

Key vulnerabilities and edge cases: What most creators miss

Having covered practical methods, it's crucial to address the edge cases and risks that trip up even experienced creators.

The most sophisticated evasion strategies go beyond simple text or image tweaks. Advanced tactics include account priming (building a reputation before introducing flagged behavior), coordinated evasion through distributed actions spread across many accounts, and context splitting where problematic signals are divided across multiple messages so no single post triggers a filter.

Research into how detection systems actually perform paints a humbling picture of platform capabilities. According to recent empirical analysis, ML-based bot detection achieves a ROC-AUC of 0.96 on benchmark datasets like Cresci-2017, suggesting strong theoretical performance. However, adversarial techniques like GradEscape reportedly achieve a 61.7% bypass rate against commercial detectors, which suggests that well-designed evasion can succeed more than half the time under those test conditions.

That gap between benchmark performance and real-world effectiveness is one of the most important things creators can understand. Platforms advertise their moderation as airtight, but actual evasion success rates tell a different story.

Here's what the data looks like across different detection scenarios:

| Detection target | Best model performance | Effective evasion rate | Key vulnerability |
|---|---|---|---|
| Duplicate image detection | Very high | Moderate (with spoofing) | Perceptual hash limits |
| Bot behavior detection | 0.96 ROC-AUC (benchmark) | ~61.7% bypass (adversarial) | Training data gaps |
| Keyword/text filters | High for exact matches | High for obfuscation | Context understanding |
| Metadata fingerprinting | High | High (with scrubbing) | Relies on file metadata |

The vulnerabilities that creators most often overlook include:

- Behavioral consistency: Posting patterns, the timing of likes, and follower-to-following ratios can flag accounts even when content is clean

- Device fingerprinting beyond metadata: Browser type, screen resolution, and IP patterns can link accounts independently of image metadata

- Cross-platform data sharing: Some platforms share signals, meaning a flag on one platform can influence your standing on another

- Adaptive detection models: ML classifiers retrain continuously, so evasion techniques have shorter shelf lives than most creators expect

- Context collapse: What evades detection on a text level may still get caught by image recognition, video analysis, or linked account behavior

One pattern worth highlighting is the vulnerability of newer AI-based moderation models to adversarial input. Classifiers built on large language models, which platforms increasingly use to understand context and nuance, can be more susceptible to certain adversarial perturbations than older rule-based systems. This counterintuitive point means that as platforms get "smarter," they can also introduce new blind spots.

Staying ahead of these edge cases requires a layered approach to social account security, not just a single tactic applied once and forgotten. The creators who maintain consistent visibility treat privacy and detection management as an ongoing practice, not a one-time fix.

Risks, controversies, and platform countermeasures

The advanced tactics bring up critical ethical challenges and countermeasures that every creator needs to understand before they choose to use any evasion strategy.

The relationship between creators and platforms is essentially an arms race, and it escalates fast. When creators develop a new evasion tactic, platforms respond by updating their classifiers. When platforms tighten their filters, creators adapt with more sophisticated obfuscation. This cycle repeats continuously, and each escalation raises the stakes for everyone involved.

Evasion aids privacy and risk reduction for legitimate creators, but the same techniques enable misinformation campaigns, scam operations, and coordinated harassment. Platforms counter with multimodal AI systems that analyze text, image, audio, and behavioral signals simultaneously, as well as human review queues for borderline cases. However, they still lag behind in nuanced situations, like detecting sarcasm, culturally specific language, or context-dependent content.

The risks creators face when using evasion tactics fall into several categories:

- Account termination if platforms detect coordinated or systematic evasion behavior

- Shadow suppression escalation where accounts that evade once get placed on permanent watchlists

- Legal exposure in jurisdictions where violating a platform's terms of service carries legal consequences

- Reputational damage if evasion tactics become public and audiences perceive the creator as deceptive

- Accidental harm when evasion methods normalize language or imagery that could be misused by bad actors

"The creators most at risk are often the ones who didn't realize they were evading anything. They just used a common workaround they saw others use, and then faced serious consequences when platforms updated their policies."

Platform countermeasures are becoming increasingly sophisticated. Beyond basic keyword filtering, major platforms now deploy:

- Perceptual hashing to identify visually similar images even after cropping, filtering, or minor edits

- Behavioral graph analysis to map relationships between accounts and detect coordinated activity

- Multimodal classifiers that examine images, captions, hashtags, and posting behavior as a combined signal

- Fingerprinting through metadata embedded in images, videos, and even audio files

This is why image anonymization needs to address the full digital footprint of an image, not just the visible content. Stripping visible watermarks is not enough if the underlying file still carries device identifiers or location data.
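The perceptual hashing countermeasure listed above can be illustrated with an average hash, one of the simplest members of that family. This is a toy sketch under stated assumptions: the input is treated as an already downsampled grayscale grid, the function names are hypothetical, and real systems presumably use more robust hashes than this one.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the grid's mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


# A tiny 4x4 grayscale "image" with alternating dark and bright columns.
original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [12, 205, 35, 215],
    [18, 198, 28, 225],
]
# A uniform brightness edit shifts every pixel and the mean together.
brightened = [[p + 10 for p in row] for row in original]
# A structurally different image: bright and dark columns swapped.
different = [[row[1], row[0], row[3], row[2]] for row in original]

h = average_hash(original)
print(hamming_distance(h, average_hash(brightened)))  # small: near-duplicate
print(hamming_distance(h, average_hash(different)))   # large: different image
```

Because each bit depends on a pixel's brightness relative to the mean rather than on its exact value, a global filter leaves the hash unchanged. That is why this kind of fingerprint survives minor edits where a byte-for-byte checksum would not.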

Pro Tip: Before using any evasion tactic, ask yourself two questions. First: does this protect my legitimate privacy, or does it help me do something the platform would rightfully prohibit? Second: if this tactic became public, would my audience understand and respect my reasoning? Ethical self-auditing is not just a moral exercise; it's practical risk management.

The uncomfortable truth: Evasion is survival, but not a solution

Here's what the industry rarely says out loud: detection evasion is largely a symptom of failed trust between creators and platforms, not a sustainable strategy.

Creators should not have to become cybersecurity practitioners just to maintain organic reach on content they've worked hard to produce. The fact that legitimate voices need to obfuscate their captions or scrub their image metadata just to avoid suppression reveals a serious flaw in how platform moderation is designed and applied.

The detection arms race is exhausting for everyone involved. Creators use obfuscation and perturbations, platforms respond with fingerprint consistency checks, and detection of edge cases like adaptive coordinated behavior reportedly tops out at an F1 score of 58%. F1 is not directly comparable to coin-flip accuracy, since what counts as chance depends on how rare the behavior is, but a score that far from perfect still exposes how much of this system operates on approximation, not precision.

We believe the real long-term fix requires platforms to invest in transparent moderation with appeals processes that work, contextual understanding that doesn't penalize ambiguity, and genuine collaboration with creator communities. Until that happens, evasion remains a tool creators must understand, not because it's ideal, but because the alternative is invisibility.
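How an F1 figure like the one above compares with chance depends on class balance. The sketch below uses the standard F1 definition; the `coin_flip_f1` helper is a hypothetical approximation based on expected counts, and the prevalence values are made up for illustration.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall from confusion counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def coin_flip_f1(prevalence: float) -> float:
    """Approximate F1 of a detector that flags accounts by fair coin flip.

    With random flags, precision is about the share of true positives in
    the population (prevalence) and recall is about 0.5.
    """
    precision, recall = prevalence, 0.5
    return 2 * precision * recall / (precision + recall)


print(round(coin_flip_f1(0.5), 3))  # balanced classes
print(round(coin_flip_f1(0.1), 3))  # rare behavior: chance-level F1 is much lower
```

When the targeted behavior is rare, random guessing scores far below 0.5 on F1, so the same headline number can sit well above chance in one setting and close to it in another.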

Use it thoughtfully, use it ethically, and never stop pushing for the systemic accountability that would make it unnecessary.

Make your content untraceable: Next steps for creators

For creators wanting to apply these strategies with privacy tools, here's a concrete next step.

Understanding detection evasion is only half the battle. Applying it consistently, safely, and at scale is where most creators hit a wall. Manual metadata scrubbing is tedious. Creating multiple unique image variations by hand takes hours. And doing it wrong, leaving even a fragment of identifying data, can undo everything you're trying to protect.

One2Many.pics is built specifically for creators who need to post the same or similar visual content across multiple accounts and platforms without leaving a traceable digital footprint. The platform strips all identifying metadata, generates visually unique image variations, and lets you download clean, ready-to-post files in bulk. Whether you're managing one account or twenty, untraceable social media images are now a straightforward part of your workflow. Creators looking to grow their reach while helping others can also explore creator affiliate options that reward you for bringing the community along.

Frequently asked questions

What is detection evasion on social media?

Detection evasion is the use of tactics to circumvent algorithms and moderation systems that identify and suppress content, letting creators maintain reach and visibility. The term covers everything from text obfuscation to behavioral pattern manipulation.

Is detection evasion legal or against platform rules?

Most platforms explicitly prohibit systematic evasion tactics in their terms of service, though enforcement varies widely depending on the platform and the specific tactic used. Whether a violation also carries legal consequences depends on the jurisdiction. Creators should review each platform's policies carefully before deploying any evasion strategy.

What are common detection evasion methods?

Text obfuscation, emoji substitutions, character manipulation, and format changes are the most widely used methods for bypassing keyword filters and ML classifiers.

Are detection evasion strategies effective against modern AI moderation?

Some strategies, particularly adversarial image and text perturbations, can still bypass newer AI systems. Platforms counter with multimodal AI and human review, which close many gaps, but nuanced cases remain difficult to detect accurately.

Can detection evasion be used ethically?

Ethical use depends entirely on intent; evasion aids privacy and fair visibility for legitimate creators, but using the same techniques to spread misinformation, run scams, or coordinate harassment is unambiguously harmful and unethical.