Every image you post carries invisible data that can expose your location, device, and identity to anyone who knows where to look. Many creators are unaware that posted images can leak metadata and be scraped for AI training, leading to serious reputation and safety risks. This isn't a fringe concern. It's a daily reality for influencers, social media managers, and agencies posting at scale. This guide breaks down what image privacy actually means for creators, which threats are most dangerous, and exactly how to build a workflow that protects your reach, your brand, and your identity without sacrificing authenticity.

Table of Contents

- What image privacy means for creators

- Common threats: How your images are misused

- Core mechanics: Building privacy into every image

- Balancing privacy, reach, and authenticity

- Edge cases and advanced defense strategies

- Our take: What most privacy advice misses for creators

- Next steps: Simplify your image privacy workflow

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Image privacy is vital | Protecting photos from AI misuse, scraping, and leaks safeguards your reputation and reach. |
| Privacy requires balance | Overprotecting images can suppress visibility, so use nuanced tools like C2PA and smart watermarking. |
| Stay proactive | Monitor your images, update tactics, and act quickly if misuse occurs to stay ahead of evolving threats. |
| Practical workflows matter | Systems that automate privacy steps let you manage scale without sacrificing control or trust. |

What image privacy means for creators

Image privacy is not just about keeping photos off the internet. For creators, it's about controlling what information travels with your images, who can use them, and how platforms interpret them. Every JPEG or PNG you upload can carry hidden data fields called EXIF metadata, which store details like GPS coordinates, camera model, shooting time, and software version. Strip that data carelessly and algorithms may flag your content. Leave it in, and you're handing strangers a map to your life.

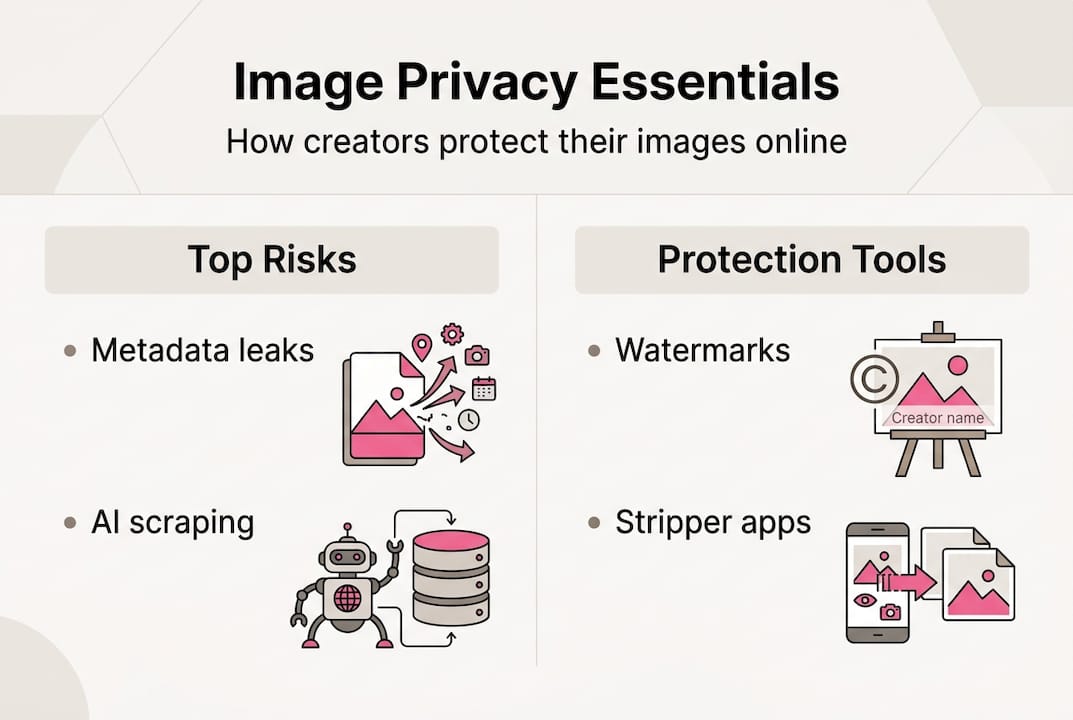

The risks fall into a few clear categories:

- Unauthorized AI scraping: Platforms and third parties harvest public images to train AI models, often without consent.

- Deepfakes and identity fraud: Your face or brand visuals get repurposed in fabricated content that damages credibility.

- Metadata leaks: Location and device info embedded in image files can reveal where you live, work, or travel.

- Algorithmic suppression: Platforms may downrank content that looks duplicated or stripped of provenance signals.

"Privacy isn't just about secrecy. For creators, it's about controlling the narrative around your brand and protecting the reach you've worked hard to build."

Understanding image anonymization basics is the starting point. Once you see how much data travels with a single upload, the urgency becomes clear. Creators who treat privacy as an afterthought are essentially publishing their digital fingerprint alongside every post. The good news is that social media security tips and the right tools can close most of these gaps without slowing down your content calendar. The ethical considerations for AI imagery are also worth understanding, because the legal and reputational stakes are rising fast. Building untraceable images into your workflow is no longer optional for serious creators.

Common threats: How your images are misused

Understanding privacy is one thing, but what concrete threats do images face after upload? The landscape is more hostile than most creators realize. Deepfake misuse and AI image scraping have surged in the past year, and the methods keep getting more sophisticated.

| Threat vector | Description | Example risk |
|---|---|---|
| AI scraping | Bots harvest public images for model training | Your likeness used in AI-generated ads without consent |
| Deepfakes | Manipulated media using your face or brand | Fake endorsements, fraudulent content |
| Metadata leaks | EXIF data exposes location and device info | Home address revealed via geotagged photo |
| Reverse image search | Anyone can find where your image appears online | Stolen content reposted without credit |
| Platform data mining | Platforms analyze uploaded images for behavioral data | Targeting profiles built without your knowledge |

The scariest threats are the invisible ones. A photo taken at home and posted without stripping GPS data can reveal your exact address to anyone who downloads it and checks the EXIF fields. No hacking required.
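To make that concrete, here is a minimal sketch of how the latitude and longitude stored in a photo's GPS fields turn into a map-ready coordinate. EXIF records each coordinate as degrees, minutes, and seconds plus a hemisphere letter. The function name `dms_to_decimal` and the sample values are illustrative assumptions, not data from a real photo.

```python
from fractions import Fraction


def dms_to_decimal(degrees, minutes, seconds, ref):
    """Convert EXIF-style degrees/minutes/seconds to signed decimal degrees.

    `ref` is the hemisphere letter stored next to the coordinate:
    "N" or "E" give a positive value, "S" or "W" a negative one.
    """
    value = Fraction(degrees) + Fraction(minutes) / 60 + Fraction(seconds) / 3600
    if ref.upper() in ("S", "W"):
        value = -value
    return float(value)


# Made-up coordinates for illustration only.
lat = dms_to_decimal(40, 26, Fraction(4614, 100), "N")
lon = dms_to_decimal(79, 58, Fraction(5621, 100), "W")
print(f"{lat:.6f}, {lon:.6f}")
```

One arcsecond of latitude is roughly 30 metres on the ground, so a coordinate stored with fractional seconds is easily precise enough to single out a building.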

On the reputation side, C2PA content credentials are emerging as a standard for proving image authenticity and origin. Without them, your content has no verifiable provenance, making it easier for bad actors to claim ownership or misrepresent your work.

52% of users worry about brands using undisclosed AI-generated images, which means your audience is already skeptical. Misuse erodes that trust fast.

Pro Tip: Run a reverse image search on your most important images using tools like TinEye or Google Images. Check monthly to catch unauthorized reuse early, before it spreads.

You can also review social image safeguards to layer your defenses across platforms and catch problems before they escalate.

Core mechanics: Building privacy into every image

Faced with these threats, how can creators reclaim control over their image assets? The answer is a three-phase model: prevent (which includes embedding credentials), monitor, and respond.

- Prevent: Strip sensitive metadata using tools like ExifTool (free, command-line) or browser-based platforms that handle bulk processing. Add visible watermarks for brand recognition and invisible watermarks for legal traceability.

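- Embed credentials: Use C2PA-compatible tools to attach content credentials that prove origin without exposing personal data. Unlike a standard watermark, a credential is cryptographically signed, so tampering is detectable. Platforms that re-encode uploads may drop embedded credentials, so check how each platform handles them.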

- Monitor: Run periodic reverse image searches with TinEye or Pixsy to track where your images appear across the web.

- Respond: If misuse is found, file a DMCA takedown, document the evidence, and escalate to legal support if needed.
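For the prevent step, it can help to see what "stripping metadata" means at the byte level. The sketch below is a simplified illustration, not a replacement for ExifTool: it walks the header segments of a JPEG and drops every APP1 segment, which is where EXIF and XMP data are stored. The function `strip_app1_segments`, the helper `_segment`, and the synthetic sample bytes are all hypothetical, and the code assumes a well-formed file.

```python
def strip_app1_segments(jpeg_bytes: bytes) -> bytes:
    """Return a copy of a JPEG with every APP1 segment (EXIF, XMP) removed.

    Simplified sketch: copies header segments up to start-of-scan,
    skipping APP1, then copies the compressed image data unchanged.
    """
    if jpeg_bytes[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")

    out = bytearray(b"\xff\xd8")
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            raise ValueError(f"expected a marker at byte {pos}")
        marker = jpeg_bytes[pos + 1]
        if marker == 0xDA:  # SOS: image data follows, copy the rest as-is
            out += jpeg_bytes[pos:]
            return bytes(out)
        # The length field counts itself (2 bytes) plus the payload.
        length = int.from_bytes(jpeg_bytes[pos + 2:pos + 4], "big")
        if marker != 0xE1:  # 0xE1 is APP1, where EXIF and XMP live
            out += jpeg_bytes[pos:pos + 2 + length]
        pos += 2 + length
    raise ValueError("no start-of-scan marker found")


def _segment(marker: int, payload: bytes) -> bytes:
    """Build one JPEG segment (hypothetical helper for the demo below)."""
    return bytes([0xFF, marker]) + (len(payload) + 2).to_bytes(2, "big") + payload


# Synthetic stand-in for a real file: SOI, APP0, APP1 (fake EXIF), SOS, EOI.
sample = (
    b"\xff\xd8"
    + _segment(0xE0, b"JFIF\x00demo")
    + _segment(0xE1, b"Exif\x00\x00fake-gps-payload")
    + b"\xff\xda\x00\x02scan-data\xff\xd9"
)
cleaned = strip_app1_segments(sample)
print(len(sample), "->", len(cleaned), "bytes")
```

Other application segments, such as IPTC data in APP13, are left in place by this sketch, which is one reason a dedicated tool is the safer choice for real files.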

Comparing your options matters. Here's a quick breakdown:

| Tool | Best for | Privacy level | Ease of use |
|---|---|---|---|
| ExifTool | Bulk metadata stripping | High | Moderate (technical) |
| Online platforms | Quick single-image processing | Medium-High | Easy |
| C2PA credentials | Provenance and authenticity | High | Moderate |
| Visible watermark | Brand recognition | Low-Medium | Easy |
| Invisible watermark | Legal traceability | High | Moderate |

Creators should use tools to strip metadata, watermark images, and add C2PA content credentials with privacy options. The key is layering these methods rather than relying on just one.

Pro Tip: Don't strip metadata completely before you understand what each platform does with it. Some platforms use certain EXIF fields to verify authenticity. Selective stripping, removing location and device data while preserving creation date, is often smarter than a full wipe.
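Selective stripping might look like the following sketch, which builds an ExifTool command that clears the GPS group and the camera make and model while leaving other tags, including the creation date, untouched. The choice of tags is an assumption for illustration. Which fields a given platform actually relies on is not something this example can tell you, and the wrapper function names are hypothetical.

```python
import shutil
import subprocess


def selective_strip_command(path: str) -> list[str]:
    """Build an ExifTool command that clears GPS and camera make/model tags.

    Tags not listed here, such as DateTimeOriginal, are left untouched.
    """
    return [
        "exiftool",
        "-gps:all=",            # delete every tag in the GPS group
        "-Make=",               # delete the camera manufacturer
        "-Model=",              # delete the camera model
        "-overwrite_original",  # do not keep a "_original" backup copy
        path,
    ]


def selective_strip(path: str) -> None:
    """Run the command, provided ExifTool is installed."""
    if shutil.which("exiftool") is None:
        raise RuntimeError("exiftool not found on PATH")
    subprocess.run(selective_strip_command(path), check=True)


# Show the command without running it ("photo.jpg" is a placeholder name).
print(" ".join(selective_strip_command("photo.jpg")))
```

Because `-overwrite_original` discards the backup, test on copies first and inspect the result before adopting it in a bulk workflow.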

For a deeper walkthrough, the guide on how to anonymize images covers platform-specific nuances. And if you want to understand the C2PA privacy risks before committing to that standard, it's worth reading before you build it into your workflow.

Balancing privacy, reach, and authenticity

The path to protection isn't always straightforward. In fact, some privacy moves cut your reach. Stripping all metadata can cause content to be flagged as a repost and risk algorithmic suppression, whereas embedding C2PA with privacy options or adding subtle watermarks maintains provenance without triggering penalties.

Here's where creators have to make real trade-offs:

- Total metadata stripping protects privacy but can make content look like a duplicate or repost to platform algorithms.

- Full C2PA embedding provides strong provenance but may expose creator identity data if not configured carefully.

- Subtle watermarking balances protection and authenticity but offers less legal weight than invisible watermarks.

- AI content disclosure builds audience trust but requires consistent application across all platforms.

"Balancing privacy and distribution means sometimes less is more. A lighter touch on protection often outperforms aggressive stripping when it comes to algorithmic reach."

Audience trust is a real factor here. Most users prefer authenticity and transparency. Disclosing when content is AI-assisted, being clear about image origins, and avoiding deceptive editing all contribute to long-term brand equity. The AI ethics for marketers framework is a useful reference for setting internal standards.

The goal is a scalable privacy workflow that doesn't require you to choose between safety and visibility. That balance is achievable, but it requires deliberate choices rather than default settings.

Edge cases and advanced defense strategies

The basics cover most cases, but what about more complicated privacy challenges? Creators working with collaborators, featuring bystanders, or posting group content face a different set of risks.

Common edge cases include:

- Bystander inclusion: Posting images that include people who haven't consented to being photographed or identified online.

- Collaborative content: When multiple creators or brands contribute to an image, ownership and usage rights get murky fast.

- Underage subjects: Stricter legal protections apply, and platforms have specific policies around minors in content.

- Brand partnerships: Sponsored content images may be subject to contract clauses that restrict how and where they're used.

If your content is stolen or misused, here's a clear response sequence:

- Document everything: Screenshot the misuse with timestamps, URLs, and any identifying information about the account or site.

- File a DMCA report: Submit a takedown notice directly to the platform hosting the stolen content.

- Contact the platform: Use the platform's dedicated IP reporting tool in addition to the DMCA process.

- Preserve evidence: Save copies of all documentation in case legal escalation is needed.

- Consult legal support: For serious misuse, especially deepfakes or commercial fraud, involve an attorney familiar with digital IP.
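The documentation steps above can be kept consistent with a small structured record. This is a minimal sketch under assumptions: the `MisuseRecord` schema, its field names, and the example URLs are hypothetical and not a legal standard. It stores the URLs, a UTC timestamp, and a SHA-256 hash of the screenshot so you can later show that the saved file has not changed.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class MisuseRecord:
    """One documented sighting of a misused image (hypothetical schema)."""
    infringing_url: str
    original_url: str
    screenshot_sha256: str
    observed_at_utc: str
    notes: str = ""


def make_record(infringing_url, original_url, screenshot_bytes, notes=""):
    """Build a record with a UTC timestamp and a hash of the screenshot."""
    return MisuseRecord(
        infringing_url=infringing_url,
        original_url=original_url,
        screenshot_sha256=hashlib.sha256(screenshot_bytes).hexdigest(),
        observed_at_utc=datetime.now(timezone.utc).isoformat(timespec="seconds"),
        notes=notes,
    )


# Placeholder values; in practice, read the screenshot bytes from the saved file.
record = make_record(
    "https://example.com/stolen-post",
    "https://example.com/my-original",
    b"placeholder screenshot bytes",
    notes="Reposted without credit",
)
print(json.dumps(asdict(record), indent=2))
```

Appending one JSON record per sighting to a single file gives you a dated trail to attach to a takedown notice or hand to an attorney.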

Creators should ensure collaborations include signed agreements prohibiting AI training and know how to respond with DMCA or law enforcement if content is misused. A simple collaboration agreement template that explicitly addresses AI training, redistribution, and platform posting rights can prevent most disputes before they start.

For scenarios involving bystanders or sensitive subjects, image anonymization for edge cases offers practical techniques like blurring, cropping, and metadata management that keep you legally and ethically covered.

Our take: What most privacy advice misses for creators

Most privacy guides hand you a checklist and call it done. Strip metadata. Add a watermark. Done. But that approach misses the most important variable: how platforms actually respond to your images after you apply those protections.

Here's what we've seen working with creators at scale. Platforms are increasingly sophisticated at detecting images that have been aggressively sanitized. A photo with zero metadata, no provenance signals, and pixel-perfect duplication across accounts looks like spam to an algorithm, even if it's completely original content. Over-protection can hurt you just as much as no protection at all.
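As a rough illustration of what "pixel-perfect duplication" means, the sketch below groups files whose bytes are identical by comparing SHA-256 hashes. It is only a pre-posting sanity check you could run on your own batch. It says nothing about how any platform detects duplicates, and the function name and sample data are made up for the example.

```python
import hashlib
from collections import defaultdict


def find_exact_duplicates(files: dict[str, bytes]) -> list[list[str]]:
    """Group file names whose contents are byte-for-byte identical."""
    by_digest = defaultdict(list)
    for name, content in files.items():
        by_digest[hashlib.sha256(content).hexdigest()].append(name)
    return [sorted(names) for names in by_digest.values() if len(names) > 1]


# Stand-in bytes instead of real image files.
batch = {
    "account_a.jpg": b"same pixels",
    "account_b.jpg": b"same pixels",
    "account_c.jpg": b"same pixels, re-exported",
}
print(find_exact_duplicates(batch))
```

A hash match only catches exact copies. Any re-export or edit changes the hash, so this check cannot tell you whether two visually similar images would be treated as duplicates elsewhere.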

The smarter move is building a flexible, dynamic privacy strategy. That means using advanced privacy solutions that generate unique image variations, not just stripped copies. It means embedding just enough provenance to satisfy platform trust signals while removing the personal data that creates risk. And it means treating privacy as a workflow design problem, not a one-time technical fix.

Disclosure and context matter too. Audiences in 2026 are more aware of AI and image manipulation than ever. Creators who are transparent about their process, and who can prove the authenticity of their content when challenged, build stronger long-term trust than those who hide behind anonymity. Privacy and transparency aren't opposites. The best creators use both.

Next steps: Simplify your image privacy workflow

Ready to take tangible action? Here's how to make untraceable images standard for your brand.

Managing metadata stripping, watermarking, and content variation manually across dozens of posts is time-consuming and error-prone. That's exactly the problem one2many.pics is built to solve.

The platform lets you upload original images and generate multiple unique versions with metadata removed, visual variations applied, and privacy settings customized to your workflow. Whether you're managing one account or fifty, the bulk processing and automation features mean you can scale your content without scaling your risk. It's designed for creators who need real privacy, not just the appearance of it. Start protecting your images the smart way.

Frequently asked questions

What is image privacy in the context of social media?

Image privacy means protecting your photos from unwanted tracking, scraping, and misuse by managing what metadata, credentials, and watermarks you include. It covers everything from guarding against scraping to preventing AI deepfakes.

How do I prevent my social images from being used in AI training?

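No single step fully blocks scraping of public images, but you can make unauthorized use harder: strip sensitive metadata, add visible and invisible watermarks, and use C2PA credentials to establish provenance. You should also monitor with reverse search tools like TinEye or Pixsy to catch misuse early.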

Can stripping all metadata hurt my social media reach?

Yes. Aggressive stripping can trigger platform algorithms to flag your content as reposted or spammy, which suppresses distribution. Selective stripping is usually the better approach.

What should I do if my image is stolen or misused?

File a DMCA report with the platform, document all evidence with timestamps and URLs, and consider legal steps if the misuse involves commercial fraud or deepfakes.