Your engagement numbers drop suddenly, your hashtags stop gaining traction, and your posts seem invisible to new audiences. Yet your account is still live, no warning has arrived, and the platform says nothing. This silent suppression affects far more creators than most realize, and it can quietly destroy months of growth without a single notification. Understanding what triggers these penalties, how platforms enforce them, and what you can do to protect your content is the difference between a thriving channel and a stagnant one. This guide breaks down every layer of that process so you can take back control.

Table of Contents

- What are social media penalties and why do they matter?

- How do platforms detect and penalize accounts?

- Suppression vs. bans: What most creators misunderstand

- Privacy-first practices to reduce penalty risk

- The uncomfortable truth: Suppression is here to stay, so adapt

- Protect your creative reach with privacy-focused tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Shadow penalties reduce reach | Most creators experience content demotion without being notified, harming growth. |
| Detection is algorithmic | Platforms use behavioral signals to silently filter or demote accounts and posts. |
| No appeal for demotions | Only behavior changes can restore visibility; support tickets don't help with suppression. |
| Proactive privacy matters | Using privacy-focused publishing practices greatly lowers penalty risk. |
| Adapt to ongoing suppression | Creators should build flexible, privacy-first strategies to future-proof their presence. |

What are social media penalties and why do they matter?

Social media penalties are not always the dramatic account suspensions or permanent bans most creators picture. In reality, the most common penalties are far quieter and far more damaging over time. They include shadowbanning, visibility filtering, and algorithmic content demotion. Your account stays active, your posts go up as usual, but the platform silently limits who sees them.

Understanding these penalties is critical because they directly cut into your reach, your brand value, and your revenue potential. A creator who loses, say, 60 percent of their organic reach without knowing why will make worse decisions, not better ones. They might post more aggressively to compensate, which often triggers additional penalties. Recognizing the impact of platform penalties early is what separates creators who grow sustainably from those who plateau and burn out.

Common types of penalties you need to know include:

- Shadowbanning: Your content exists but is hidden from non-followers and search results

- Visibility filtering: Platform limits distribution in feeds, replies, and recommendations

- Content demotion: Posts are shown to fewer people algorithmically, with no notification

- Hashtag suppression: Specific hashtags stop surfacing your content in discovery

- Engagement throttling: Platform reduces how often your posts appear in algorithmic recommendations

"X (Twitter) applies visibility filtering based on behavioral signals like high follow/unfollow velocity, rapid posting, repetitive content, engagement bait, high block/mute rates, and banned hashtags, reducing tweets in search, replies, and recommendations."

Most creators experience these penalties without any formal warning or explanation. The absence of notification is intentional. Platforms frame these measures as quality protection for users, not punishments for creators. But the result is the same: your content reaches fewer people, and you may not realize it for weeks. That's why building privacy-first habits into your workflow from day one matters far more than reacting after the damage is done.

How do platforms detect and penalize accounts?

With the basics established, the next step is to see how these penalties are triggered and enforced. Platforms use sophisticated, multi-layered detection systems that run constantly in the background. These systems analyze behavioral signals, scan content patterns, and monitor engagement metrics to score every account and every piece of content.

Here's a breakdown of the most common penalty triggers platforms watch for:

| Trigger | Platform behavior | Risk level |
|---|---|---|
| High follow/unfollow velocity | Signals inauthentic growth tactics | High |
| Repetitive or duplicate content | Flags spam-like posting patterns | High |
| Engagement bait phrases | Detects artificial interaction prompts | Medium |
| Banned or restricted hashtags | Reduces distribution across the platform | High |
| Rapid posting frequency | Interpreted as bot-like activity | Medium |
| High block/mute rates | Indicates content unwanted by users | High |
| Identical metadata across posts | Reveals automation or cross-posting tools | Medium |

When detection systems identify enough risk signals, the platform responds in a sequence that most creators never see clearly. Here is how platform detection methods typically play out:

- Signal accumulation: The system registers multiple behavioral indicators over days or weeks

- Risk scoring: Your account or individual post receives an internal trust score adjustment

- Soft filtering: Distribution is quietly reduced in feeds and search results

- Extended suppression: If signals continue, demotion becomes more severe and longer-lasting

- Review flagging: In extreme cases, content is sent to human review for further action
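To make the sequence above concrete, here is a toy model in Python. It is only an illustration: no platform publishes its scoring rules, so the signal names, weights, decay rate, and thresholds below are all hypothetical. What it shows is the general shape of the process, where signals add to a running score, the score fades when behavior is clean, and the stage depends on how high the score has climbed.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical weights: real platforms do not disclose how they score signals.
SIGNAL_WEIGHTS = {
    "follow_unfollow_velocity": 0.30,
    "duplicate_content": 0.25,
    "banned_hashtag": 0.25,
    "engagement_bait": 0.10,
    "rapid_posting": 0.10,
}
DECAY = 0.8  # assumed share of yesterday's score that carries into today


@dataclass
class AccountRisk:
    """Running risk score for one account in this toy model."""

    score: float = 0.0

    def observe_day(self, signals: list[str]) -> str:
        """Fold one day of observed signals into the score and return the stage."""
        self.score = self.score * DECAY + sum(SIGNAL_WEIGHTS[s] for s in signals)
        return self.stage()

    def stage(self) -> str:
        """Map the current score to a stage using assumed thresholds."""
        if self.score >= 1.5:
            return "review_flagging"
        if self.score >= 1.0:
            return "extended_suppression"
        if self.score >= 0.5:
            return "soft_filtering"
        return "normal"
```

Calling `observe_day` once per day with that day's signals, then with empty lists, shows the score rising while risky behavior continues and decaying once it stops. That mirrors why recovery depends on a stretch of consistently clean activity.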

One crucial thing to understand: suppression is probabilistic. It builds from signals accumulated over time, and unlike formal suspensions, algorithmic demotions come with no appeals process. Recovery relies on behavioral change, not support tickets. Filing a report with the platform's help center after being shadowbanned will therefore accomplish almost nothing. The system that suppressed you is automated, and it will only re-evaluate your account when it detects consistently cleaner signals.

Understanding compliance rules on each platform gives you the clearest roadmap for what to avoid and what to prioritize.

Pro Tip: Run a quarterly audit of your posting patterns. Review follow/unfollow ratios, posting frequency, hashtag lists, and engagement bait language. Cleaning up these signals before they accumulate is far easier than reversing a suppression penalty after the fact.
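If you can export your post history, part of this audit can be scripted. The Python sketch below is one possible approach, not a platform-endorsed check. The post format, the `MAX_POSTS_PER_DAY` threshold, the `BAIT_PHRASES` list, and the restricted hashtag set you pass in are all assumptions to replace with your own data.

```python
from __future__ import annotations

from collections import Counter
from datetime import datetime
from typing import TypedDict

# Hypothetical values: tune these to your own account history.
MAX_POSTS_PER_DAY = 5
BAIT_PHRASES = ("like if", "tag a friend", "share to win", "comment below to")


class Post(TypedDict):
    time: datetime
    caption: str
    hashtags: list[str]


def audit_posts(posts: list[Post], restricted_hashtags: set[str]) -> dict:
    """Summarize patterns from the audit list: frequency, duplicates, bait, hashtags."""
    # Days on which posting volume exceeded the chosen limit.
    per_day = Counter(p["time"].date() for p in posts)
    busy_days = sorted(d.isoformat() for d, n in per_day.items() if n > MAX_POSTS_PER_DAY)

    # Captions reused verbatim (ignoring case and surrounding whitespace).
    captions = Counter(p["caption"].strip().lower() for p in posts)
    duplicates = sorted(c for c, n in captions.items() if n > 1)

    # Captions containing any engagement-bait phrase.
    bait = [p["caption"] for p in posts
            if any(phrase in p["caption"].lower() for phrase in BAIT_PHRASES)]

    # Hashtags you used that appear on your own restricted list.
    restricted = {t.lower().lstrip("#") for t in restricted_hashtags}
    used = {t.lower().lstrip("#") for p in posts for t in p["hashtags"]}

    return {
        "busy_days": busy_days,
        "duplicate_captions": duplicates,
        "bait_captions": bait,
        "restricted_hashtags_used": sorted(used & restricted),
    }


def follow_churn_ratio(follows: int, unfollows: int) -> float:
    """Unfollows divided by follows for the period (0.0 when there were no follows)."""
    return unfollows / follows if follows else 0.0
```

An empty report and a low churn ratio do not prove an account is safe, but any non-empty entry is a concrete item to clean up before the next quarter.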

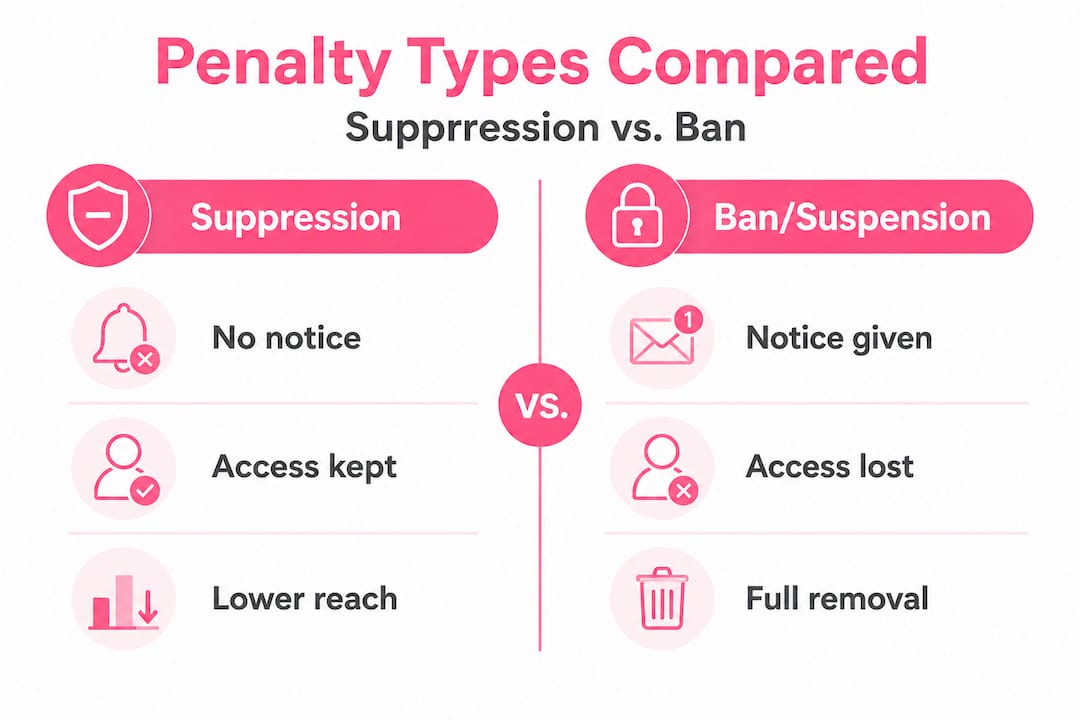

Suppression vs. bans: What most creators misunderstand

A clear understanding of enforcement types helps creators adjust their strategies intelligently. The single biggest misconception creators carry is that if they haven't been banned, everything is fine. In reality, the most impactful penalties are the ones you never see coming.

Here's how suppression compares to outright bans:

| Factor | Suppression | Ban/Suspension |
|---|---|---|
| Notification | None | Usually yes |
| Account access | Retained | Removed |
| Appeal process | Not available | Often available |
| Visibility | Severely reduced | Eliminated |
| Recovery method | Behavioral change | Appeal or new account |
| Timeline | Weeks to months | Immediate |

Many creators operate under suppression for weeks or months without realizing it. They assume low engagement means their content isn't resonating creatively. They change their visual style, try new formats, or post at different times, none of which addresses the actual problem. This is a costly misdiagnosis that wastes both time and creative energy.

The tension between platforms and creators over suppression is real and growing. Platforms frame these systems as quality filters designed to protect users from spam, misinformation, and low-value content. But from a creator's perspective, the opacity feels deeply unfair. The stakes are also rising at a policy level: regulatory fines like X's €120M penalty under the EU's Digital Services Act (DSA) highlight the growing tension between platform moderation practices and free speech expectations.

Common misconceptions creators hold about suppression include:

- "If I'm suppressed, I'd know about it": Platforms almost never notify creators of algorithmic demotion

- "It only happens to spammers": Even legitimate creators trigger penalties through unknowing pattern violations

- "Better content will fix it": Quality alone won't override behavioral risk signals already in the system

- "One viral post will reset everything": Algorithmic trust scores recover slowly, not instantly

- "Posting more will make up for low reach": Higher volume often signals the exact behaviors that triggered suppression

The most effective defense is to learn the evasion and privacy techniques that reduce your risk profile before penalties accumulate. Monitor your engagement metrics weekly. A sudden, consistent drop in reach that doesn't match your content quality is your clearest warning signal that something has shifted algorithmically.
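One way to turn that weekly check into a rule is to compare recent reach against your own baseline. The Python sketch below assumes you record one reach figure per week. The function name, window sizes, and the 40 percent threshold are illustrative choices, not platform-defined values.

```python
from __future__ import annotations

from statistics import median


def sustained_reach_drop(
    weekly_reach: list[float],
    baseline_weeks: int = 8,
    recent_weeks: int = 3,
    drop_threshold: float = 0.4,
) -> bool:
    """Return True if every recent week sits at least `drop_threshold` below baseline.

    The baseline is the median of the `baseline_weeks` that precede the
    most recent `recent_weeks`. The median keeps one viral week from
    inflating the comparison.
    """
    if len(weekly_reach) < baseline_weeks + recent_weeks:
        return False  # not enough history to judge
    baseline = median(weekly_reach[-(baseline_weeks + recent_weeks):-recent_weeks])
    if baseline <= 0:
        return False
    cutoff = baseline * (1 - drop_threshold)
    # Require the drop in every recent week, so one bad week does not trigger it.
    return all(week <= cutoff for week in weekly_reach[-recent_weeks:])
```

Requiring the drop to hold across several consecutive weeks is what separates a sudden, consistent decline from the normal ups and downs of individual posts.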

Privacy-first practices to reduce penalty risk

With your understanding of penalty mechanisms clarified, the next step is taking proactive action. Privacy-first practices aren't just about keeping personal data safe. They're about reducing the signals that trigger algorithmic suppression in the first place. The two goals are deeply connected.

Here are the core steps every creator should build into their workflow:

- Use platform-native tools exclusively: Posting through official apps and APIs instead of third-party automation tools removes a significant source of suspicious behavioral signals

- Avoid linking to sensitive or flagged websites: Direct links to restricted content categories can trigger content demotion instantly, particularly in bio fields and post captions

- Verify compliance before publishing: Check that your content meets current platform rules on age-appropriate content, misleading claims, and engagement tactics before posting

- Remove revealing metadata from images: Location data, device information, and timestamps embedded in images can be used to fingerprint accounts and link multiple profiles

- Build and maintain an email list: A direct audience channel lets you reach your community completely outside algorithmic control, which is your most reliable fallback when platforms suppress your content

The regulatory landscape is shifting in ways that affect how platforms apply suppression. Child-safety and privacy rules like New York's SAFE for Kids Act are pushing platforms to apply stricter filtering to content connected with behavioral manipulation and addictive feed design. Creators who aren't aware of how these policies shape detection algorithms are at increasing risk of unintentional violations.

Pro Tip: Build your email list consistently, even when platform reach is strong. An algorithm change or suppression event can happen without warning. Your email list is the one audience asset you fully own and control, and it bypasses every platform filter entirely.

Staying ahead of these risks requires ongoing effort. Privacy-focused posting tips give you a concrete framework to keep your content clean and your reach stable. Combine them with privacy tools designed for creators, and you can significantly reduce your exposure to the behavioral signals that trigger suppression.

One area most creators overlook entirely is protecting image privacy. When you post the same image, or even similar images, across multiple accounts or platforms, embedded metadata creates a traceable fingerprint. Platforms use this fingerprint to link accounts and apply penalties across all of them simultaneously. Stripping and varying that metadata is one of the simplest and most effective suppression prevention steps available.
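For JPEG files, removing embedded location, device, and timestamp data does not require re-encoding the image. The Python sketch below uses only the standard library and is a minimal illustration, not a complete privacy tool. It walks the file's segments and drops the ones that normally carry metadata (APP1 for EXIF and XMP, APP13 for IPTC, COM for comments), leaving the pixel data untouched. The function name and the choice of segments to drop are assumptions for this example, and other formats such as PNG or HEIC need different handling.

```python
import struct

# Segment markers dropped by this sketch: APP1 carries EXIF and XMP,
# APP13 carries IPTC/Photoshop data, COM carries free-text comments.
METADATA_MARKERS = {0xE1, 0xED, 0xFE}


def strip_jpeg_metadata(data: bytes) -> bytes:
    """Return the JPEG bytes with metadata segments removed, pixels unchanged."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing start-of-image marker")
    out = bytearray(data[:2])
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a segment marker at offset {pos}")
        marker = data[pos + 1]
        if marker == 0xDA:
            # Start of scan: compressed image data follows, so copy the rest as-is.
            out += data[pos:]
            return bytes(out)
        # The 2-byte big-endian length counts itself but not the marker bytes.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:
            raise ValueError(f"invalid segment length at offset {pos}")
        end = pos + 2 + length
        if marker not in METADATA_MARKERS:
            out += data[pos:end]
        pos = end
    raise ValueError("no start-of-scan marker found; file may be truncated")
```

A typical call reads a file's bytes, passes them through `strip_jpeg_metadata`, and writes the result to a new file. One side effect to expect: the EXIF orientation tag is removed along with everything else, so a photo that relied on it may display rotated until you rotate the pixels themselves.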

The uncomfortable truth: Suppression is here to stay, so adapt

Here's what no quick-fix guide will tell you directly: algorithmic suppression isn't a glitch that will get patched in the next platform update. It's a deliberate feature. Platforms benefit from controlling content distribution. Suppression gives them influence over what gets amplified and what quietly disappears, all without the public relations cost of visible bans.

Filing support tickets after demotion is a waste of time. The systems that suppress content are not monitored by support teams. Recovery depends entirely on behavioral change over time, not on convincing a platform representative that you deserve better reach. The creators who recover fastest are those who identify the problematic signals quickly and eliminate them, not those who argue their case most eloquently.

The real long-term solution is building an adaptable content strategy that anticipates platform opacity. This means creating content variations for reach so that duplicate detection systems don't flag your multi-platform posting. It means growing direct audience channels so that platform suppression never fully cuts you off from your community. And it means treating privacy as infrastructure, not an afterthought.

The creators who thrive long-term are those who understand that platforms will always prioritize their own interests. Your job is to stay one step ahead by focusing on what you can actually control: your content signals, your privacy practices, and your direct audience relationships. Expect opacity, track your own data carefully, and adapt before the platform forces you to.

Protect your creative reach with privacy-focused tools

As you put these lessons into practice, having the right tools makes the difference between guessing and executing with confidence.

One2Many.pics was built specifically for creators who want to protect their reach and avoid the suppression triggers that come from repetitive or metadata-rich content. The platform transforms your original images into multiple unique variations, stripping out location data, device info, and timestamps while creating visual diversity that bypasses duplicate detection algorithms. Whether you're managing one account or scaling across a network, these tools give you real protection against the signals that trigger platform penalties. You can also explore the affiliate privacy tools program to share these solutions with your own audience while earning in the process.

Frequently asked questions

How can I tell if my account or posts are being suppressed?

Look for consistent drops in engagement and reduced visibility in searches or hashtags, since platforms rarely notify you directly. X's visibility filtering reduces tweet reach in search, replies, and recommendations based on behavioral signals, which is a pattern common across most major platforms.

Is there a way to appeal or reverse content suppression?

No official appeal exists for suppression; the most effective recovery method is changing your posting behavior to reduce risky signals. Suppression is probabilistic and algorithmic, meaning recovery requires consistent behavioral improvement rather than a platform support response.

Which privacy steps reduce the risk of social media penalties?

Use platform-native tools, avoid direct links to sensitive websites, verify compliance, and build email lists to reach your audience directly. Regulatory frameworks like New York's SAFE for Kids Act are pushing platforms toward stricter filtering, making proactive compliance even more important for creators in 2026.

Why do platforms use content suppression instead of direct bans?

Suppression allows platforms to quietly filter content they consider low-quality or risky while avoiding public pushback over outright bans. Regulatory tensions like X's €120M DSA fine show why platforms prefer silent filtering over visible enforcement, since the political cost of overt censorship continues to grow.