AI tools can generate thousands of images in minutes, but that speed creates a false sense of security for creators and marketers. Platforms like Instagram and Meta have made it increasingly clear that volume alone does not equal reach. Suppression of unoriginal content is becoming a real consequence for accounts that skip meaningful transformation. This guide cuts through the noise to show you exactly what makes an image truly unique, why it matters for both reach and privacy, and how to build a scalable workflow that actually works.

Table of Contents

- Why unoriginal images face suppression

- What makes an image "unique" on social platforms

- How diffusion models create distinctive images

- Building a brand-safe workflow for unique visuals

- Best practices for validating and iterating image outputs

- What most guides miss about unique image generation

- Tools to drive your unique image workflow

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Suppression targets unoriginal content | Major platforms penalize duplicates and superficial edits, so meaningful transformation is essential for visibility. |
| Diffusion models enable uniqueness | Modern image generation relies on controllable diffusion models for scalable, distinctive outputs. |
| Brand safety needs editorial input | Human creativity and IP-aware workflows are vital alongside AI generation for legal and reputational protection. |
| Validation is key for reach | Regular benchmarking and output testing help ensure images perform well and adhere to platform rules. |
| Balance AI and real photos | Mixing authentic and AI visuals enhances engagement and audience trust. |

Why unoriginal images face suppression

The conversation around image penalties on social platforms has shifted dramatically. A few years ago, copyright infringement was the main concern. Today, platforms are taking a much harder stance on what they call "unoriginal" content, even when no copyright is technically violated.

Instagram and Meta have updated their ranking signals to actively deprioritize reposts and images that lack meaningful transformation. This is not just about obvious copy-paste reposts. Accounts that share lightly filtered versions of trending images, screenshot compilations, or bulk AI outputs that look suspiciously similar can all trigger suppression. The penalties are subtle but serious: reduced distribution, limited Explore page visibility, and, for repeat offenders, account-level demotions that are very hard to recover from.

Here is what actually triggers suppression on major platforms:

- Reposting without transformation: Sharing another creator's image, even with credit, without adding significant visual change

- Aggregator patterns: Accounts that consistently publish content sourced from other creators get flagged algorithmically, not just post by post

- Metadata fingerprinting: Platforms read embedded file metadata including timestamps, device info, and GPS coordinates to identify identical or near-identical images

- Batch similarity: Uploading multiple images from the same AI session can expose patterns that flag your account as an automated aggregator

"Unique image generation helps creators reduce suppression risk on social platforms, but only when the variation is meaningful, not cosmetic."

The distinction matters because many creators believe they are safe just because they used AI to make something "new." That is a dangerous assumption. Understanding how unique images relate to engagement helps clarify why transformation has to be substantive. Platform duplicate detection is now precise enough to catch near-matches, not just exact copies.

What makes an image "unique" on social platforms

Not all edits are created equal. There is a meaningful gap between superficial adjustments and transformations that platforms actually recognize as original content. Understanding this gap is central to any smart posting strategy.

Platforms assess originality across several dimensions: the subject matter itself, the composition and framing, the visual style, and the overall account behavior pattern. A brightness tweak or a color filter does not move the needle. Even swapping a background while keeping the exact same subject pose and composition is often still flagged. As Instagram has signaled directly, they actively down-rank aggregator behavior and superficial edits.

Here is a clear breakdown of what counts as superficial versus meaningful transformation:

| Edit type | Platform perception | Risk level |
|---|---|---|
| Brightness or contrast adjustment | Superficial | High |
| Adding a text overlay to a stock image | Superficial | High |
| Color filter swap | Superficial | High |
| Full recomposition with new subject angle | Meaningful | Low |
| New style, mood, and lighting via AI | Meaningful | Low |
| Metadata stripped with unique visual variation | Meaningful | Low |
| Background swap on identical foreground | Borderline | Medium |

The takeaway is that meaningful transformation has to touch multiple elements simultaneously. Changing just one variable, like color, rarely qualifies. Changing composition, subject focus, color palette, and mood together creates something that algorithms and human reviewers recognize as genuinely different.

Smart creators who focus on modifying images for reach understand that every variable in an image is a lever. Pulling multiple levers at once is the only reliable way to pass platform originality checks. Building content variation strategies around this principle separates accounts that scale from those that stall.

Pro Tip: Always diversify your prompts at the concept level, not just the style level. Start with different creative briefs for each image batch. If all your prompts describe the same scene with slight word changes, your outputs will cluster visually and trigger similarity flags.
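
One rough way to catch prompts that differ only by "slight word changes" is to measure how many words each pair shares before you generate anything. The sketch below uses Jaccard overlap on word sets. The function names, the sample prompts, and the 0.7 threshold are all illustrative assumptions, and word overlap is only a proxy for how similar the resulting images will look.

```python
from itertools import combinations


def token_set(prompt: str) -> set[str]:
    """Lowercase the prompt and split it into a set of words without punctuation."""
    return {word.strip(".,;:!?\"'") for word in prompt.lower().split()} - {""}


def jaccard(a: str, b: str) -> float:
    """Share of distinct words that two prompts have in common (0.0 to 1.0)."""
    set_a, set_b = token_set(a), token_set(b)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


def near_duplicate_prompts(
    prompts: list[str], threshold: float = 0.7  # threshold is an assumed cutoff
) -> list[tuple[int, int, float]]:
    """Return (index, index, score) for prompt pairs at or above the threshold."""
    flagged = []
    for (i, a), (j, b) in combinations(enumerate(prompts), 2):
        score = jaccard(a, b)
        if score >= threshold:
            flagged.append((i, j, round(score, 2)))
    return flagged


# Hypothetical prompts: the first two describe the same scene with one word swapped.
prompts = [
    "a woman drinking coffee in a city cafe",
    "a woman drinking tea in a city cafe",
    "flat lay of hiking gear on a wooden table, overhead shot",
]
flagged = near_duplicate_prompts(prompts)
print(flagged)
```

Pairs that get flagged are candidates for a rewrite at the concept level, for example a different scene or creative brief, rather than another word swap.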

How diffusion models create distinctive images

Modern AI image generation relies heavily on diffusion models. These systems are trained to reverse a process that gradually adds noise to images, which lets them build high-quality visuals starting from pure random noise. The quality and uniqueness of the output depend heavily on how you configure the generation process.

Diffusion model research shows that settings like guidance scale, sampling steps, and seed values directly control the balance between prompt fidelity and creative variation. A high guidance scale keeps the output tightly aligned with your prompt but can make multiple generations look eerily similar. A lower guidance scale adds more randomness, which helps with visual diversity but can drift from your intended concept.

Here are the core steps to optimize diffusion model settings for your branding needs:

- Set a unique seed for every generation. Seeds are the numerical starting point for the randomness process. Reusing a seed with the same prompt and settings reproduces essentially the same image, and reusing it across different prompts can still yield similar compositions.

- Vary your sampling steps between 20 and 50. Fewer steps generate faster but less refined images. More steps add detail. Rotating between ranges keeps outputs visually distinct.

- Adjust guidance scale by use case. Use higher values (7 to 10) for product shots where accuracy matters. Use lower values (4 to 6) for lifestyle and editorial content where variety is more valuable.

- Change the base model or checkpoint regularly. Different model checkpoints produce visually distinct aesthetic signatures even with identical prompts.

- Test in small batches before scaling. Run five to ten images per setting configuration and review for clustering before generating at volume.
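
The steps above can be sketched as a small settings planner that you run before calling whatever image generator you use. Everything here is an assumption for illustration: `GenerationConfig`, `build_batch`, and the use-case keys are hypothetical names, and the numeric ranges simply mirror the ones suggested in the list. The sketch only produces settings. It does not call any model.

```python
import random
from dataclasses import dataclass

# Ranges taken from the steps above; the dictionary keys are illustrative labels.
GUIDANCE_RANGES = {
    "product": (7.0, 10.0),   # higher guidance: closer prompt adherence
    "lifestyle": (4.0, 6.0),  # lower guidance: more variety
}
STEP_RANGE = (20, 50)


@dataclass(frozen=True)
class GenerationConfig:
    seed: int
    steps: int
    guidance_scale: float
    use_case: str


def build_batch(use_case: str, size: int, rng: random.Random) -> list[GenerationConfig]:
    """Plan a small test batch with a distinct seed for every image."""
    low, high = GUIDANCE_RANGES[use_case]
    seeds = rng.sample(range(2**32), size)  # sample() never repeats a value
    return [
        GenerationConfig(
            seed=seed,
            steps=rng.randint(*STEP_RANGE),  # inclusive on both ends
            guidance_scale=round(rng.uniform(low, high), 1),
            use_case=use_case,
        )
        for seed in seeds
    ]


# A five-image test batch to review for clustering before scaling up.
batch = build_batch("lifestyle", size=5, rng=random.Random())
for config in batch:
    print(config)
```

Storing each config next to its output image makes it easier to trace which settings produced a cluster of look-alike results.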

One important truth that benchmark studies confirm is that no single AI image generator wins across all use cases. Some tools excel at photorealism. Others handle text in images better. Still others are stronger for stylized or branded content. The practical takeaway: test your tools against your actual content category, not against generic industry rankings.

A useful resource for understanding how these variations translate to real posting scenarios is this guide to image variations, which covers scale and protection simultaneously.

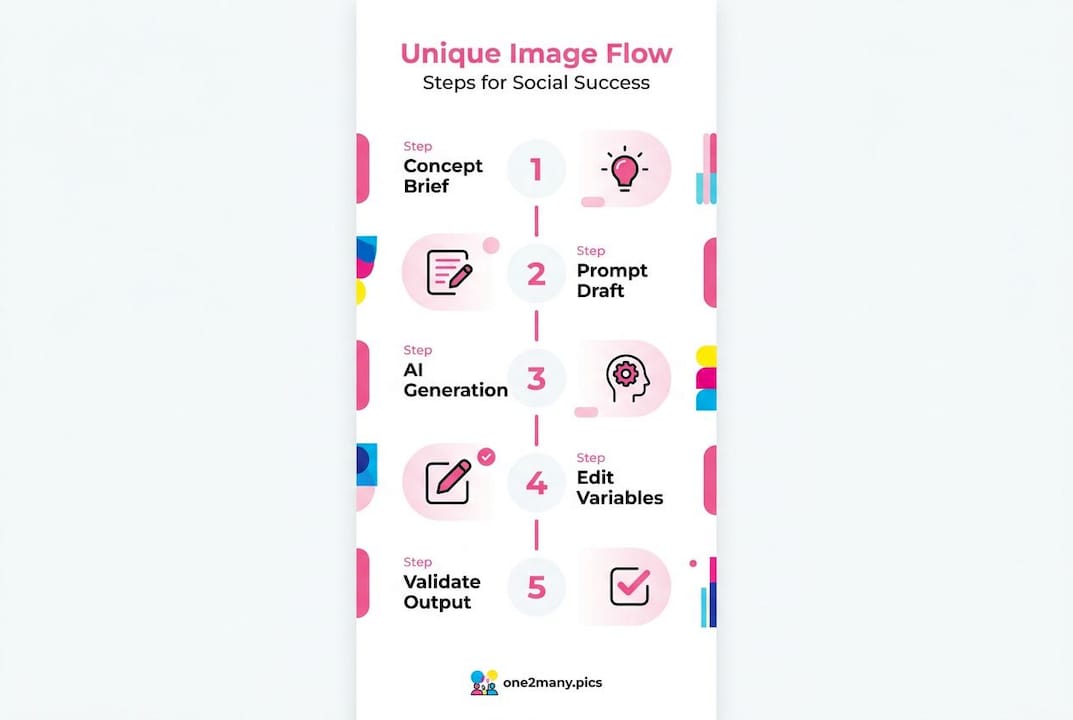

Building a brand-safe workflow for unique visuals

Generating unique images is only half the job. The other half is building a repeatable, brand-consistent workflow that holds up legally, stays within platform terms, and produces content your audience actually connects with.

Start by treating every prompt as a structured specification rather than a casual sentence. A complete prompt spec should include: the primary subject, the desired composition or framing, the visual style and aesthetic, the mood or emotional tone, and any technical parameters like aspect ratio or resolution. Casual prompts like "a woman drinking coffee in a city" produce inconsistent results at scale. Structured prompts like "close-up portrait, professional woman, warm golden hour lighting, editorial style, soft bokeh background, 4:5 aspect ratio" give you far more reproducible brand-aligned outputs.
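
A minimal sketch of such a specification, assuming a hypothetical `PromptSpec` class whose fields follow the five elements listed above. The field order used in `render` is an illustrative choice, not a requirement of any particular generator.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PromptSpec:
    subject: str
    composition: str
    style: str
    mood: str
    aspect_ratio: str

    def __post_init__(self) -> None:
        # Reject incomplete specs so casual one-line prompts cannot slip through.
        empty = [f.name for f in fields(self) if not getattr(self, f.name).strip()]
        if empty:
            raise ValueError(f"prompt spec is missing: {', '.join(empty)}")

    def render(self) -> str:
        """Join the fields into a single comma-separated prompt string."""
        return ", ".join([
            self.composition,
            self.subject,
            self.mood,
            self.style,
            f"{self.aspect_ratio} aspect ratio",
        ])


spec = PromptSpec(
    subject="professional woman",
    composition="close-up portrait",
    style="editorial style, soft bokeh background",
    mood="warm golden hour lighting",
    aspect_ratio="4:5",
)
print(spec.render())
```

Rendering this spec reproduces the structured prompt quoted above, while the validation step keeps every batch from starting with a missing element.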

Build and maintain a prompt library. Document your best-performing prompts, the settings used, the model version, and the output date. This library becomes your creative asset over time. It also protects you if you need to demonstrate originality or creative intent.
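
One lightweight way to keep such a library is a JSON document grouped by content category. The sketch below is an assumption about how you might structure it: `LibraryEntry`, `add_entry`, the `pillar` grouping, and the placeholder model name are all hypothetical. The recorded fields match the ones named above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class LibraryEntry:
    pillar: str          # content category, e.g. "product" or "lifestyle"
    prompt: str
    model_version: str
    settings: dict
    output_date: str     # ISO date string
    notes: str = ""


def add_entry(library: dict[str, list[dict]], entry: LibraryEntry) -> None:
    """File the entry under its content category."""
    library.setdefault(entry.pillar, []).append(asdict(entry))


library: dict[str, list[dict]] = {}
add_entry(library, LibraryEntry(
    pillar="lifestyle",
    prompt="close-up portrait, professional woman, warm golden hour lighting",
    model_version="example-model-v1",  # placeholder, not a real model name
    settings={"seed": 12345, "steps": 30, "guidance_scale": 5.0},
    output_date=date.today().isoformat(),
    notes="Selected by a human reviewer from a five-image test batch.",
))

# Serialize for storage or version control; write this string to a file as needed.
serialized = json.dumps(library, indent=2)
print(serialized)
```

Keeping the file in version control adds a dated history of each change, which supports the goal of demonstrating creative intent.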

Key steps for a brand-safe workflow:

- Separate prompt libraries by content pillar: Product, lifestyle, educational, and promotional content each need their own prompt families to maintain visual consistency

- Run compliance checks before bulk publishing: Review a sample from every batch for platform term compliance and brand guideline alignment

- Add human editorial review as a final gate: Someone on your team should approve batches before they go live, especially for credibility-sensitive campaigns

- Track which outputs perform best: Feed performance data back into your prompt library to refine future generations

Structured prompt specifications are essential for achieving scalable uniqueness without losing brand coherence. This is especially important for agencies managing multiple client accounts simultaneously.

The legal side also requires attention. U.S. copyright law currently requires human authorship for copyright registration, which means fully AI-generated images may not qualify for copyright protection that your brand can enforce. Incorporating meaningful human creative decisions into the generation and selection process helps establish authorship. Always review platform-specific terms of service, since some restrict commercial use of AI-generated content in specific formats.

Refer to this breakdown on safe unique image creation and strategies to protect creative work when designing your workflow for multiple platforms.

Pro Tip: Before publishing any AI-generated image commercially, save documentation of your creative decisions: the prompt, the model version, the edits made, and the human review notes. This paper trail can be critical if authorship or originality is ever questioned.

Best practices for validating and iterating image outputs

Getting to a polished unique image is not a one-shot process. The most effective creators and marketers treat AI image generation as a production pipeline with built-in quality checks rather than a tap you turn on and walk away from.

Start your validation process by benchmarking your chosen tools against your specific content needs. Test for prompt adherence (does the output match what you described?), text accuracy (if your image includes text, is it legible and correct?), visual consistency across a batch, and style alignment with your brand. Benchmark data consistently shows that recurring failure modes like distorted hands, garbled text, and inconsistent lighting are model-specific. Knowing your tool's weaknesses lets you build targeted review steps around them.

Key steps to validate AI outputs before publishing:

- Compare five outputs per prompt against your brand style guide

- Check all outputs for metadata and strip identifiable file data before uploading

- Run a reverse image search on a sample to confirm visual uniqueness

- Review for brand consistency: colors, tone, subject alignment

- Confirm aspect ratio and resolution match platform specs
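
For the metadata step in the checklist above, the following is a minimal standard-library sketch for JPEG files only. It walks the file's header segments and drops APP1 (where EXIF and XMP data usually live), APP13 (commonly IPTC data), and comment segments, leaving the image data untouched. The function name is hypothetical, and the sketch assumes a well-formed file with no padding bytes between segments. A maintained imaging library is the more robust choice for production use and for other formats.

```python
import struct

SOI = b"\xff\xd8"  # start-of-image marker that opens every JPEG
SOS = 0xDA         # start of scan: compressed pixel data follows
# Segments dropped in this sketch: APP1 (EXIF, XMP), APP13 (IPTC), COM (comments).
METADATA_MARKERS = {0xE1, 0xED, 0xFE}


def strip_jpeg_metadata(data: bytes) -> bytes:
    """Return a copy of the JPEG bytes without the metadata segments."""
    if not data.startswith(SOI):
        raise ValueError("not a JPEG file")
    out = bytearray(SOI)
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"unexpected byte at offset {pos}")
        marker = data[pos + 1]
        if marker == SOS:
            out += data[pos:]  # copy the scan header and pixel data as-is
            return bytes(out)
        # The two-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if marker not in METADATA_MARKERS:
            out += data[pos:pos + 2 + length]
        pos += 2 + length
    raise ValueError("no image data found")


# Usage (file names are placeholders):
# from pathlib import Path
# cleaned = strip_jpeg_metadata(Path("original.jpg").read_bytes())
# Path("clean.jpg").write_bytes(cleaned)
```

Other application segments, such as color profiles, are deliberately kept so the image renders the same after cleaning.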

One thing Hootsuite's research on social trends makes clear is that fully replacing human photography with AI images can hurt credibility in certain content categories. Testimonials, behind-the-scenes content, and trust-building posts perform better with authentic human images. AI-generated visuals work best for product showcases, conceptual graphics, and top-of-funnel content where aesthetic impact matters more than personal authenticity.

Building an iteration loop means treating every batch as a learning opportunity. Track which images get the most engagement, what they have in common visually, and which prompts generated them. Over time, you develop a feedback cycle that makes your workflow smarter with every posting cycle. Pairing this with insights on smart visual engagement gives you a data-informed edge over creators who are just guessing.
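
A feedback loop like this can start as a simple aggregation of engagement by prompt. The sketch below assumes you can export per-post impressions and engagements and tag each post with the prompt that produced it. The field names, prompt IDs, and numbers are made-up sample data, and engagement rate is defined here as engagements divided by impressions.

```python
from collections import defaultdict
from statistics import mean


def rank_prompts(posts: list[dict]) -> list[tuple[str, float]]:
    """Average engagement rate per prompt, best performers first."""
    rates_by_prompt = defaultdict(list)
    for post in posts:
        impressions = post["impressions"]
        rate = post["engagements"] / impressions if impressions else 0.0
        rates_by_prompt[post["prompt_id"]].append(rate)
    ranked = [(pid, round(mean(rates), 4)) for pid, rates in rates_by_prompt.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Made-up sample data for illustration only.
posts = [
    {"prompt_id": "lifestyle-007", "impressions": 1000, "engagements": 50},
    {"prompt_id": "lifestyle-007", "impressions": 2000, "engagements": 60},
    {"prompt_id": "product-002", "impressions": 1500, "engagements": 120},
]
ranking = rank_prompts(posts)
print(ranking)
```

The top-ranked prompt IDs are the ones worth expanding into new variations, while consistently low performers can be retired from the prompt library.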

What most guides miss about unique image generation

Most tutorials on AI image generation stop at the technical layer. They explain how to write prompts, adjust settings, and output images quickly. What they rarely address is the bigger picture: technical uniqueness without strategic intent is not enough to grow a real audience or protect your brand.

Platforms are not just checking whether your image is an exact copy. They are building behavioral profiles of accounts. An account that posts 20 nearly identical AI-generated images in a day, even if each one passes a basic originality check, still looks like an automated aggregator to the algorithm. The platform rewards accounts that demonstrate varied, human-feeling creative activity over time, not just technically different pixels.

Here is the contrarian truth we have come to believe through working with creators at scale: over-automating your visual content pipeline is a long-term risk, not a long-term advantage. When everything is AI-generated and optimized for uniqueness at the technical level, you start losing the human texture that actually builds audience loyalty. Real followers notice. Engagement quality drops even when reach stays stable.

The most effective strategy is a hybrid one. Use AI generation, together with image protection strategies, for volume, efficiency, and privacy. Use human-created and editorial content as credibility anchors. Let the two work together rather than replacing one with the other. This balance is what separates creators who scale sustainably from those who burn out their accounts chasing algorithmic shortcuts.

Technical uniqueness is the floor, not the ceiling. Build above it.

Tools to drive your unique image workflow

You have the knowledge to generate meaningful, platform-safe visuals at scale. The next step is making sure the tools you use support both uniqueness and privacy in one streamlined process.

One2Many.pics was built specifically for creators, managers, and agencies who need to post across multiple accounts without triggering duplicate detection or exposing their digital footprint. The platform strips metadata including location data, timestamps, and device identifiers, then generates visual variations that pass platform originality checks. You get a clean, scalable output that protects your privacy and keeps your content visible. From single-image privacy to bulk processing with workflow integrations, the subscription tiers are designed to match where you are in your content operation today.

Frequently asked questions

What causes image suppression on social platforms?

Suppression occurs when images are flagged as duplicate or unoriginal, often due to reposts or superficial edits. Instagram and Meta penalize unoriginal photo posts with reduced visibility, making meaningful transformation the key to avoiding penalties.

How can creators validate the uniqueness of their images?

Creators should benchmark AI outputs against platform-specific criteria, checking prompt adherence, text accuracy, and visual consistency before publishing at scale. Benchmark findings confirm that marketers must test against their own use cases rather than rely on a single "best model" claim.

Are AI-generated images legally safe for brand use?

Not always. U.S. copyright law requires human authorship for copyright registration, and platform terms can restrict how AI outputs are used commercially, making an IP-aware workflow essential.

What is the best method for scaling unique social visuals?

Using structured prompts and maintaining a prompt library allows for consistent, scalable image generation. Prompt structure and libraries are the foundation of any sustainable high-volume unique image workflow.

Should human photos be replaced by AI images in all cases?

No. For authenticity-driven content like testimonials or behind-the-scenes posts, human images are more credible. Social trend research recommends balancing AI-generated visuals with human editorial judgment for authenticity perception.